.NET Native AoT Make AWS Lambda Function Go Brrr

Upgrading a .NET AWS Lambda function to use native AoT to improve cold-start performance by over 85%.

Since 2017 I’ve been maintaining an Alexa skill, London Travel, that provides real-time information about the status of London Underground, London Overground, and the DLR (and the Elizabeth Line). The skill is an AWS Lambda function, originally implemented in Node.js, but since 2019 it has been implemented in .NET.

The skill uses the Alexa Skills SDK for .NET to handle the interactions with Alexa, and since earlier this month has been running on .NET 8.0.0.

I’ve been using a custom runtime for the Lambda function instead of the .NET managed runtime. The main reason for this is that it lets me use any version of .NET, not just the ones that AWS support. This has allowed me to not only use pre-release versions of .NET for testing, but it also enables me to use the latest versions of .NET as soon as they are released. The only disadvantage of this approach is that I have to patch the version of .NET being used once a month for Patch Tuesday, but I have automation set up to do that for me, so the overhead of doing that is actually minimal 😎.

As part of the .NET 8 release, the .NET team has put a lot of effort into improving the breadth of the capability of the native AoT support. With .NET 8, many more use cases are supported for AoT, making the performance and size benefits of AoT available to more applications than before. The .NET team at AWS has also been working hard on ensuring that the various AWS SDK libraries are compatible with AoT, with the various NuGet packages now annotated (and tested) as being AoT compatible.

With all these changes, I was curious to see how much of a difference AoT would make to the performance of my Lambda function, so I decided to try it out. In this post I’ll go through what I needed to change to allow publishing my Alexa skill as a native application, what I learned along the way, and the results of the changes to the function’s runtime performance.

TL;DR: It’s faster, smaller, and cheaper to run. 🚀🔥

Let’s dive in!

Publishing as AoT

The first step to migrating an application to native AoT is to turn it on and see what breaks.

This is easy to do with the .NET 8 SDK - all that’s needed is to enable the PublishAot property in the application’s project file and then to build it. This will then (unless you’re very lucky) generate a bunch of compiler warnings, letting you know which of your dependencies are not compatible with AoT (if any), as well as what APIs your code uses that aren’t AoT compatible.
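As a minimal sketch, the relevant part of a project file might look like the following. Only the PublishAot property comes from the step described above; the output type and target framework are assumed values for a typical .NET 8 custom-runtime Lambda project, not a copy of the skill’s actual project file.

```xml
<Project Sdk="Microsoft.NET.Sdk">
  <PropertyGroup>
    <!-- Assumed values, for illustration only -->
    <OutputType>Exe</OutputType>
    <TargetFramework>net8.0</TargetFramework>
    <!-- Opt in to native AoT; AoT and trimming analysis warnings are then reported by build and publish -->
    <PublishAot>true</PublishAot>
  </PropertyGroup>
</Project>
```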

For my skill, the two main culprits of AoT incompatibilities were the Microsoft.ApplicationInsights and Newtonsoft.Json NuGet packages.

I haven’t looked at the Application Insights metrics generated by the skill for a long time, and its team have no plans to support native AoT, so the path forward there was pretty easy - I just removed it.

Removing Newtonsoft.Json is a much more involved proposition though…

Removing Newtonsoft.Json

The Alexa.NET NuGet package that I used to implement most of the code for interacting with Alexa uses the ubiquitous Newtonsoft.Json library for serializing and deserializing JSON. As well as Newtonsoft.Json not natively supporting asynchronous code, it also isn’t AoT compatible. Alexa.NET uses a lot of advanced features of Newtonsoft.Json, such as polymorphic (de)serialization which is very incompatible with native AoT, so I knew that migrating away from it in Alexa.NET wouldn’t be an easy task.

Despite that, being a good open source citizen, I raised an issue to see if the maintainers of Alexa.NET would be interested in supporting AoT. Due to the amount of work required to move over to System.Text.Json (and the ripple effect that would have on their extension ecosystem), the maintainers weren’t interested in supporting AoT. That’s fair, and it also meant that, as I’d need to tackle the whole problem myself, the timescales for the upgrade were now entirely in my hands.

The bulk of the work to remove Newtonsoft.Json involved adding a bunch of classes to handle deserializing the requests passed to the skill by the Alexa service, and then to correctly serialize the responses to respond to the user. You can see the full set of changes in this commit. In summary, the changes were:

- Add classes for the JSON request and response objects and wire them up to the JSON source generator;

- Switch from having the Lambda use the Amazon.Lambda.Serialization.Json NuGet package to Amazon.Lambda.Serialization.SystemTextJson so that it used the JSON source-generated classes (via the SourceGeneratorLambdaJsonSerializer<T> class);

- Add code to map between these new classes and the Alexa.NET types for generating the SSML responses to respond to the user.
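To give a flavour of the first change, here is a minimal sketch of wiring request and response classes up to the System.Text.Json source generator. The type names (SkillRequest, RequestBody, SkillResponse, AppJsonSerializerContext) and the heavily trimmed property sets are hypothetical and for illustration only; they are not the classes from the skill itself.

```csharp
using System;
using System.Text.Json;
using System.Text.Json.Serialization;

// Round-trip through the source-generated metadata, so no reflection is needed at runtime.
string json = """{"version":"1.0","request":{"type":"LaunchRequest","locale":"en-GB"}}""";

SkillRequest? request = JsonSerializer.Deserialize(json, AppJsonSerializerContext.Default.SkillRequest);
Console.WriteLine(request?.Request?.Type);

var response = new SkillResponse { Version = "1.0" };
Console.WriteLine(JsonSerializer.Serialize(response, AppJsonSerializerContext.Default.SkillResponse));

// Hypothetical, heavily trimmed shape of an incoming request.
public sealed class SkillRequest
{
    [JsonPropertyName("version")]
    public string? Version { get; set; }

    [JsonPropertyName("request")]
    public RequestBody? Request { get; set; }
}

public sealed class RequestBody
{
    [JsonPropertyName("type")]
    public string? Type { get; set; }

    [JsonPropertyName("locale")]
    public string? Locale { get; set; }
}

// Hypothetical, heavily trimmed shape of an outgoing response.
public sealed class SkillResponse
{
    [JsonPropertyName("version")]
    public string? Version { get; set; }
}

// The source generator fills in this partial class at compile time
// with serialization metadata for each type listed below.
[JsonSerializable(typeof(SkillRequest))]
[JsonSerializable(typeof(SkillResponse))]
internal partial class AppJsonSerializerContext : JsonSerializerContext
{
}
```

A context type like this is what gets supplied as the type argument to SourceGeneratorLambdaJsonSerializer<T> when the function is bootstrapped.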

All-in-all this was the most time-consuming part of the migration, but most of it was very boilerplate code. Originally I tried to replicate the polymorphism that Alexa.NET used for the various Alexa request and response objects, but unfortunately I hit this limitation of the System.Text.Json serializer where the type discriminator needs to be the first property of the object being deserialized. As this isn’t the case with the Alexa requests and cannot be changed, there was no way to work around this. 🥲

For now I’ve removed the polymorphism and created a single big object with all of the properties I need, using only the properties relevant to a specific type of request. I hope that in the future this is something that gets extended in some way in the serializer so I can make the POCOs a bit nicer and restore the original code.
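The flattened approach can be sketched roughly as below: one class carries the union of the properties, and the code branches on the type string instead of on a .NET type. The class names, the Describe helper, the StatusIntent name and the small set of properties shown are all hypothetical; the real request objects have many more properties.

```csharp
using System;
using System.Text.Json.Serialization;

var intent = new Request { Type = "IntentRequest", Intent = new Intent { Name = "StatusIntent" } };
Console.WriteLine(Describe(intent));

// Branch on the discriminator value rather than relying on polymorphic deserialization.
static string Describe(Request request) => request.Type switch
{
    "LaunchRequest" => "launch",
    "IntentRequest" => $"intent: {request.Intent?.Name}",
    "SessionEndedRequest" => $"ended: {request.Reason}",
    _ => "unknown",
};

// One flat class holding the properties of every request type that is handled.
public sealed class Request
{
    [JsonPropertyName("type")]
    public string? Type { get; set; }

    // Only populated for IntentRequest.
    [JsonPropertyName("intent")]
    public Intent? Intent { get; set; }

    // Only populated for SessionEndedRequest.
    [JsonPropertyName("reason")]
    public string? Reason { get; set; }
}

public sealed class Intent
{
    [JsonPropertyName("name")]
    public string? Name { get; set; }
}
```

The trade-off is that nothing in the type system stops code from reading a property that doesn’t apply to the current request type, so every type-specific property has to be nullable.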

Using Invariant Globalization

Another constraint of native AoT is that if you use globalisation features, say for DateTime formatting, you also need to include the ICU libraries via a NuGet package and enable App-local ICU. This is because the provided.al2023 custom runtime based on Amazon Linux 2023 does not include the ICU libraries by default.

The downside of adding this is that it adds a lot of size to the published application - in my case, it doubled the size of the published artifacts for native AoT. Taking a look around in my code, I wasn’t actually using any features that needed culture-specific formatting support, so I was able to remove App-local ICU and use invariant globalization instead (InvariantGlobalization=true).
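In the project file that setting is a single MSBuild property. This is a minimal fragment rather than the skill’s full project file:

```xml
<PropertyGroup>
  <!-- Use invariant culture behaviour so the ICU libraries are not needed at runtime -->
  <InvariantGlobalization>true</InvariantGlobalization>
</PropertyGroup>
```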

Switch from Graviton (arm64) to x86_64

The Lambda was running on AWS Graviton (arm64) before the migration to AoT, but a limitation of native AoT is that there is no support for cross-platform compilation of native AoT applications.

I thought the same was also true for cross-architecture compilation, but we’ll come back to that later when I’ve properly read the documentation… ⌛

For the initial AoT support, rather than get into the weeds of trying to compile for arm64 on the GitHub Actions Ubuntu x64 runners I use for CI/CD, I switched the Lambda back to using x86_64 to compile the application for the same architecture as used in GitHub Actions. To get things moving, switching the architecture was the easiest path forward. GitHub are also planning to add support for Arm-based hosted runners in the future, so once those become generally available any extra steps to work around these limitations should go away.

AoT Publishing Improvements

I incrementally deployed the changes in my pull request to test and measure the impact of different changes as I was going along. For example, I experimented with reducing my Lambda’s memory from 192 MB to the minimum supported by AWS Lambda, which is 128 MB, but that didn’t actually improve the performance. This is because the memory setting for an AWS Lambda function also determines how much CPU is allocated to it. So while reducing the memory was possible because the function was well within the allowance, the CPU performance was also reduced, meaning that the overall time to service requests was actually longer, which makes the requests more expensive. In this case, 192 MB is the better balance of memory and performance in terms of overall cost.
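The trade-off is easier to see with some rough arithmetic. Lambda compute is billed in GB-seconds (configured memory multiplied by billed duration), so a smaller memory setting only saves money if the function doesn’t slow down too much. In the sketch below, the 600 ms duration at 128 MB is a hypothetical value chosen purely for illustration, and the per-request charge is ignored.

```csharp
using System;

// The 350 ms figure is the billed duration at 192 MB reported in this post.
// The 600 ms figure for 128 MB is hypothetical, chosen only to illustrate the trade-off.
double at192 = GigabyteSeconds(memoryMb: 192, billedMs: 350);
double at128 = GigabyteSeconds(memoryMb: 128, billedMs: 600);

Console.WriteLine($"192 MB: {at192:F4} GB-seconds");
Console.WriteLine($"128 MB: {at128:F4} GB-seconds");

// Less memory only costs less if the billed duration grows by less than 192 / 128 = 1.5x.
Console.WriteLine($"Break-even slowdown: {192.0 / 128.0}x");

// Billed compute is proportional to configured memory (in GB) multiplied by billed duration (in seconds).
static double GigabyteSeconds(int memoryMb, double billedMs) =>
    (memoryMb / 1024.0) * (billedMs / 1000.0);
```

In other words, dropping from 192 MB to 128 MB has to slow requests down by less than 1.5x before it becomes the cheaper option.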

With all of the changes above made, I was able to merge the pull request and deploy the Lambda to my production environment. As expected, the switch to native AoT improved the performance of the function, particularly the cold-start performance, but I was surprised by just how much things improved.

The numbers below aren’t scientifically rigorous as I just compared a few cold-starts before and after the change and noted the CloudWatch metrics associated with them, but as you can see from the summary table below the gains are pretty significant, with all the metrics improving by over 60%! 🤯

| Metric | Before AoT | After AoT | Change |
| --- | --- | --- | --- |
| Published Size | 123 MB | 39.1 MB | -68% |
| Published Files | 240 | 4 | -98% |
| Executable Size | - | 13.4 MB | - |
| Duration | 3092.87 ms | 219.74 ms | -93% |
| Billed Duration | 3817 ms | 350 ms | -91% |
| Memory Size | 192 MB | 192 MB | - |
| Max Memory Used | 89 MB | 31 MB | -65% |
| Init Duration | 723.62 ms | 129.42 ms | -82% |

The Published Size metric includes the symbols for the application to make stack traces more useful and two configuration files. The symbols could be omitted to reduce the size further. The Executable Size metric shows the size of the skill’s compiled executable file.

Switching Back to Graviton

With the native AoT changes deployed, I wanted to switch back to using the Graviton architecture to see if there was any difference in performance between the two. Graviton, on a like-for-like basis, is also cheaper than x86_64, and switching back gets me to the status quo of before switching to native AoT.

The easiest way to achieve this, I figured, was to publish the Lambda function in a Docker image in my GitHub Actions workflow, and then output the compiled application back to the hosted runner, then publish it as with my existing CD workflow.

With the help of my colleague Hugo Woodiwiss who’d already been experimenting with cross-architecture compilation for AWS Lambda, I was able to add a Dockerfile and make trivial changes to my GitHub Actions CI/CD workflow in this pull request to do just that.

Once merged and deployed I took another unscientific sample of the performance from CloudWatch to see the result of the changes. I was surprised to find that many of the metrics actually went backwards compared to the x86_64 version - only the function initialisation duration improved, as you can see in the table below.

| Metric | x86_64 | arm64 | Change |
| --- | --- | --- | --- |
| Published Size | 39.1 MB | 39.2 MB | +0.2% |
| Executable Size | 13.4 MB | 13.9 MB | +3.7% |
| Duration | 219.74 ms | 357.59 ms | +62.7% |
| Billed Duration | 350 ms | 471 ms | +34.6% |
| Memory Size | 192 MB | 192 MB | - |
| Max Memory Used | 31 MB | 32 MB | +3.2% |
| Init Duration | 129.42 ms | 112.44 ms | -13.1% |

I’m not sure why the performance isn’t as good on arm64, but the numbers are still much better than without native AoT. It’s of course perfectly possible that the performance is better and my non-scientific performance measurement just found an unfavourable result. I’m going to stay on Graviton though rather than switch back again, as it’s cheaper and I’m not seeing any significant difference in performance (and cost) for my use case and the usage my skill gets.

Maybe once it’s been running for a month and I get my next AWS bill I can take a look comparing month-to-month to see what the difference is in cost and metrics. To be honest though, I imagine I won’t see a difference between x86_64 and arm64 in terms of cost, as the difference will be dwarfed by the decrease in cost from switching to native AoT. 📉💷

Removing Docker

So while writing this blog post and reading the native AoT documentation for cross-compilation again, I discovered that while cross-platform compilation isn’t supported, there is limited support for cross-architecture compilation. There’s even a copy-paste example of how to set it up for Ubuntu 22.04, which is what the GitHub Actions ubuntu-latest hosted runner uses. Interesting. 😈

This means that I can actually compile the application for arm64 on the GitHub Actions x86_64 runners, which means that the changes I made to use Docker are actually redundant - that’s even better! I tested it out using the steps in the documentation with this pull request and it seemed to work perfectly fine, just the same as when compiled in an arm64 Docker container.

I’ve now removed the Dockerfile and the changes to the GitHub Actions workflow, and the Lambda is now back to being published as a native application directly from the GitHub Actions hosted runner for ubuntu-latest, just as it was before migrating to native AoT. 💫

Summary

All-in-all it was a fun learning experience converting my first .NET application to use native AoT. I learned about some of the limitations of native AoT, and in many cases how to overcome them. The net result of the changes is that my Lambda function is now faster, smaller, and cheaper to run than ever before - thanks .NET team!

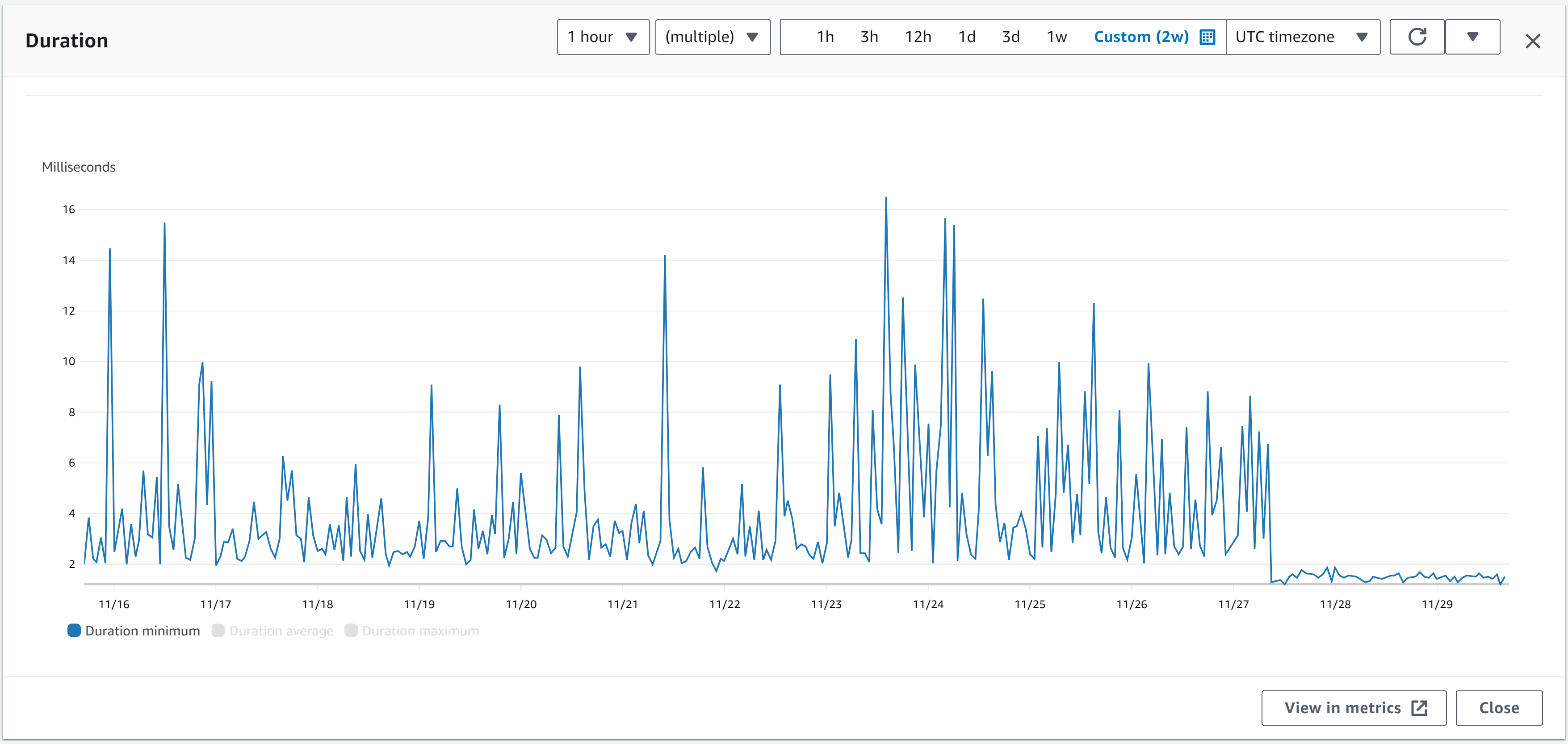

I think this image of the Duration minimum metric from CloudWatch for the Lambda function over the last 2 weeks says it all. Spot when the changes were deployed… 🚀

To summarise, the net effects of converting my Alexa skill’s Lambda function to use native AoT are:

- Faster: The Lambda function is now faster to start and respond to requests, with the cold-start time reduced by 84% and the billed duration reduced by 88%. 🏎️

- Leaner: The Lambda function is now leaner, with maximum memory used on cold-start down to 32 MB for a saving of 64%. 🏋️

- Smaller: The published Lambda function is now 68% smaller, at just 39.2 MB. The total size of the ZIP file deployed to AWS Lambda is 12.4 MB. 🤏

Of course your mileage for a different application may vary, but depending on how many warnings you get from trying to turn on native AoT and the overall cost of the effort of fixing them, I would have thought that the majority of AWS Lambda .NET workloads could see significant benefit from switching to deploy with native AoT.

The biggest barrier for adoption right now, if you don’t already use one, is the need to use a custom runtime. Here’s hoping that early in 2024 the AWS Lambda team will launch a managed runtime for .NET 8 and more applications will be able to benefit from native AoT with most of their existing Lambda runtime configuration settings for .NET 6.

I have a hunch that native AoT is going to become more of a thing over the next few years in the .NET ecosystem and that, like strong-naming, it’s going to become viral: something that the community will ask for more and more in the NuGet.org packages that our applications are built on top of.

I hope that in the .NET 9 timeframe ASP.NET Core gets further support for native AoT, such as for Razor Pages, so that I can convert even more of my deployed applications to benefit from the performance and cost improvements that native AoT brings.

I hope that you’ve found this blog post interesting, and that it helps you migrate some of your workloads to benefit from native AoT and the improved performance it can bring to your applications. 🚀🔥