Martin Costello's Blog

The blog of a software developer and tester.

Levelling Up Security with the GitHub Secure Open Source Fund

After sitting on the secret for the last few months, I’m excited to finally share that back in May I was selected to participate in the second cohort of the GitHub Secure Open Source Fund through my maintenance of Polly. 🎉

The GitHub Secure Open Source Fund is a GitHub-led initiative aimed at improving the security of open source software at scale by providing funding and resources to maintainers of popular open source projects. Big names in the software industry like 1Password, American Express, Shopify, Stripe, and Vercel help fund this initiative to enhance the security posture of open source software projects across many language ecosystems.

Many open source projects are fundamental building blocks of countless software applications, whether open source or proprietary, and the security of the entire software supply chain can be at risk if security vulnerabilities occur in these community-driven projects. For example, Polly is a dependency of .NET Aspire, which has become quite popular over the last year.

Through my participation in the GitHub Secure Open Source Fund, I gained access to a wealth of valuable resources. These included guidance from GitHub staff and other experts on best practices for securing open source software, as well as funding to help sustain my involvement in the development and maintenance of the many open source projects I contribute to.

Continuous Benchmarks on a Budget

Over the last few months I’ve been doing a bunch of testing with the new OpenAPI support in .NET 9. As part of that testing, I wanted to see how the performance of the new libraries compared to the existing open source libraries for OpenAPI support in .NET, the most popular of which are NSwag and Swashbuckle.AspNetCore.

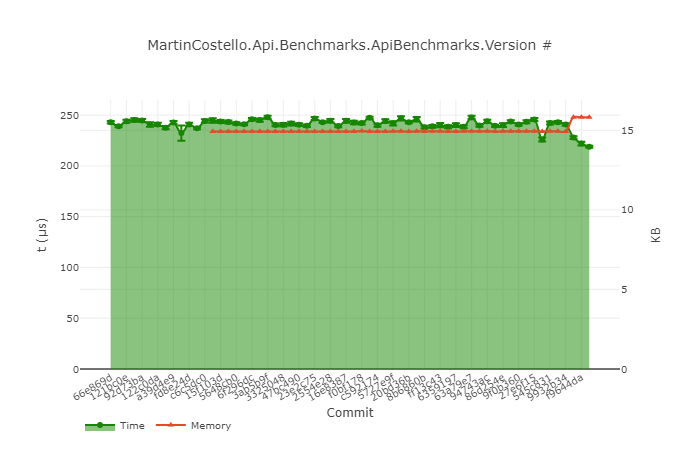

It’s fairly easy to get up and running writing some benchmarks using BenchmarkDotNet, but it’s usually a task that you sit down and do manually when the need arises, and then it gets forgotten about as time goes on. Because of that, I thought it would be a fun mini-project to set up some automation to run the benchmarks on a continuous basis so that I could easily monitor the performance of my open source projects going forwards.

In this post I’ll cover how I went about setting up a continuous benchmarking pipeline using GitHub Actions, GitHub Pages and Blazor to run and visualise the results of the benchmarks on a “good enough” basis without needing to spend any money* on infrastructure.
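To make the idea of a "good enough" pipeline a bit more concrete, here is a minimal sketch of one step such a pipeline could perform: pulling the mean time of each benchmark out of a results file and appending it, keyed by commit, to a history that a static page could chart. It assumes a report shaped like BenchmarkDotNet's JSON exporter output (a `Benchmarks` array whose entries have `FullName` and `Statistics.Mean`); the function names, the history format and the sample benchmark name are hypothetical, and this is not necessarily how the pipeline described in the post works.

```python
import json


def extract_means(report_json: str) -> dict[str, float]:
    """Map each benchmark's full name to its mean time (nanoseconds).

    Assumes a report shaped like BenchmarkDotNet's JSON exporter output:
    {"Benchmarks": [{"FullName": ..., "Statistics": {"Mean": ...}}, ...]}
    """
    report = json.loads(report_json)
    return {
        benchmark["FullName"]: benchmark["Statistics"]["Mean"]
        for benchmark in report["Benchmarks"]
    }


def append_run(history: list[dict], commit_sha: str, report_json: str) -> list[dict]:
    """Record one run's means against a commit so a static page can plot trends."""
    history.append({"commit": commit_sha, "means": extract_means(report_json)})
    return history


if __name__ == "__main__":
    # Hypothetical sample report; real reports contain many more fields.
    sample = json.dumps({
        "Benchmarks": [
            {"FullName": "MyBenchmarks.GetDocument", "Statistics": {"Mean": 1250.5}},
        ]
    })
    print(json.dumps(append_run([], "abc1234", sample), indent=2))
```

Storing the history as plain JSON keeps hosting costs at zero, since any static site (such as one served from GitHub Pages) can fetch and render it.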

What's New for OpenAPI with .NET 9

Developers in the .NET ecosystem have been writing APIs with ASP.NET and ASP.NET Core for years, and OpenAPI has been a popular choice for documenting those APIs. At its core, an OpenAPI document is a machine-readable description of the endpoints available in an API. It contains information not only about parameters, requests and responses, but also additional metadata such as descriptions of properties, security-related metadata, and more.

These documents can then be consumed by tools such as Swagger UI to provide a user interface for developers to interact with the API quickly and easily, such as when testing. With the recent surge in popularity of AI-based development tools, OpenAPI has become even more important as a way of describing APIs in a form that machines can understand.
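As a small illustration of what "machine-readable" means in practice, the sketch below walks the `paths` object of an OpenAPI document and lists each operation with its summary, which is the kind of thing tools like Swagger UI do before rendering anything. The example "Todo API" document is hypothetical, and the code only looks at a handful of fields from the OpenAPI specification.

```python
import json

# HTTP methods that can appear as operations in an OpenAPI Path Item Object.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def list_operations(document: dict) -> list[str]:
    """Return 'METHOD /path - summary' for each operation in an OpenAPI document."""
    operations = []
    for path, path_item in document.get("paths", {}).items():
        for method, operation in path_item.items():
            # Path items can also contain non-operation keys such as "parameters".
            if method.lower() not in HTTP_METHODS:
                continue
            line = f"{method.upper()} {path}"
            summary = operation.get("summary")
            if summary:
                line += f" - {summary}"
            operations.append(line)
    return operations


if __name__ == "__main__":
    # A hypothetical, heavily trimmed OpenAPI document.
    document = json.loads("""
    {
      "openapi": "3.0.1",
      "info": {"title": "Todo API", "version": "v1"},
      "paths": {
        "/todos": {
          "get": {"summary": "List all todos"},
          "post": {"summary": "Create a todo"}
        },
        "/todos/{id}": {
          "parameters": [{"name": "id", "in": "path", "required": true}],
          "get": {"summary": "Get a todo by ID"}
        }
      }
    }
    """)
    for line in list_operations(document):
        print(line)
```

Running it prints one line per operation (`GET /todos - List all todos`, `POST /todos - Create a todo`, `GET /todos/{id} - Get a todo by ID`), with the path-level `parameters` entry skipped.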

For a long time, the two most common libraries to produce API specifications at runtime for ASP.NET Core have been NSwag and Swashbuckle. Both libraries allow developers to generate rich OpenAPI documents for their APIs in JSON and/or YAML from their existing code. The endpoints can then be augmented in different ways, such as with attributes or custom code, to further enrich the generated documents and provide a great Developer Experience for their consumers.

With the upcoming release of ASP.NET Core 9, the ASP.NET team have added new functionality to the existing Microsoft.AspNetCore.OpenApi NuGet package that provides a new way to generate OpenAPI documents for ASP.NET Core Minimal APIs.

In this post, we’ll take a look at the new functionality and compare it to the existing NSwag and Swashbuckle libraries to see how it stacks up in terms of both features and performance.

Look ma, no Dockerfile! 🚫🐋 - Publishing containers with the .NET SDK 📦

Containers have been a thing in the software ecosystem for a few years now, with lots of associated technologies and concepts - Docker, Kubernetes, Helm charts, sidecars and many more. Using containers simplifies the deployment of your application by reducing things down to a single artifact that you can deploy along with all the required dependencies, including the operating system, and everything should Just Work™️. It’s almost like shipping your whole machine off to production!

The cost of that simplicity of deployment is a steeper learning curve for you, the developer, to understand all these additional concepts and technologies. In addition, you will likely need to revisit how you build your application, creating a Dockerfile to produce a container image that contains everything you need to build and run your application.

The more complicated your build process, the more daunting this becomes - for example, if your application builds client-side assets with a JavaScript toolchain. In that case, you need to install Node.js, npm etc. in the container build image too so you can produce those assets. Things can quickly get complicated, and that’s before you even start thinking about things like layer caching, exposing ports, what user to run as and more. 😮‍💨

What if we could simplify a lot of that complexity and just build our application like we would if we weren’t using containers, but then just turn it into a container image? Well, the .NET 8 SDK allows us to do exactly this, meaning you can containerise your application and not need a Dockerfile at all! 🚫🐋

Plus, as a bonus, if building with GitHub Actions, we can leverage this support to attest the provenance of our container images with minimal additional effort. 🕵️🪪

.NET Native AoT Make AWS Lambda Function Go Brrr

Since 2017 I’ve been maintaining an Alexa skill, London Travel, that provides real-time information about the status of London Underground, London Overground, and the DLR (and the Elizabeth Line). The skill is an AWS Lambda function, originally implemented in Node.js, but since 2019 it has been implemented in .NET.

The skill uses the Alexa Skills SDK for .NET to handle the interactions with Alexa, and since earlier this month has been running on .NET 8.0.0.

I’ve been using a custom runtime for the Lambda function instead of the .NET managed runtime. The main reason for this is that it lets me use any version of .NET, not just the ones that AWS support. This has allowed me to not only use pre-release versions of .NET for testing, but it also enables me to use the latest versions of .NET as soon as they are released. The only disadvantage of this approach is that I have to patch the version of .NET being used once a month for Patch Tuesday, but I have automation set up to do that for me, so the overhead of doing that is actually minimal 😎.

As part of the .NET 8 release, the .NET team has put a lot of effort into broadening the capabilities of native AoT. With .NET 8, many more use cases are supported for AoT, making its performance and size benefits available to more applications than before. The .NET team at AWS has also been working hard on ensuring that the various AWS SDK libraries are compatible with AoT, with the NuGet packages now annotated (and tested) as being AoT compatible.

With all these changes, I was curious to see how much of a difference AoT would make to the performance of my Lambda function, so I decided to try it out. In this post I’ll go through what I needed to change to allow publishing my Alexa skill as a native application, what I learned along the way, and the results of the changes to the function’s runtime performance.

TL;DR: It’s faster, smaller, and cheaper to run. 🚀🔥

Let’s dive in!

Upgrading to .NET 8: Part 6 - The Stable Release

Last week at .NET Conf 2023, .NET 8 was released as the latest Long Term Support (LTS) release of the .NET platform.

With the release of .NET 8.0.0 and the end of the preview releases, my past week can be summed up by the following image:

Upgrading to .NET 8: Part 5 - Preview 7 and Release Candidates 1 and 2

This post is a bumper edition, covering three different releases: .NET 8 preview 7, release candidate 1 and release candidate 2.

I originally intended to continue the one-post-per-preview series, but time got away from me with preview 7 (plus there wasn’t much to say about it), and then I went on holiday for two weeks just as release candidate 1 landed. Given release candidate 2 was released just a few days ago, I figured I’d catch up with myself and summarise everything in this one blog post instead!

Release Candidate 2 is also the last planned release before the final release of .NET 8 in November, which will coincide with .NET Conf 2023, so this is going to be the penultimate post in this series.

Upgrading to .NET 8: Part 4 - Preview 6

Following on from part 3 of this series, I’ve been continuing to upgrade my projects to .NET 8 - this time to preview 6. In this post I’ll cover more of my experiences with the new source generators in this preview, as well as a new feature of C# 12: primary constructors.

Upgrading to .NET 8: Part 3 - Previews 1-5

In the previous post in this series I described how, with some GitHub Actions workflows, we can reduce the amount of manual work required to test each preview of .NET 8 in our projects. With the infrastructure to do that set up, we can now dig into some highlights from our testing of the .NET 8 preview releases available so far this year!

Upgrading to .NET 8: Part 2 - Automation is our Friend

In part 1 of this series I recommended that you prepare to upgrade to .NET 8 and suggested that you start off by testing the preview releases. Testing the preview releases is a great way to get a head start on the upgrade process and to identify any issues sooner rather than later, but it does require an investment of your time with each monthly preview.

Even if you don’t want to test new functionality, you still need to download the new .NET SDK, update all the .NET SDK and NuGet package versions in your projects, and then test that everything still works (that’s already automated at least, right?). This can be a time-consuming process over the course of a new .NET release, and it becomes harder to scale if you want to test lots of different codebases with the latest preview of the next .NET release.

What if we could automate some of this process so that we only need to focus on the parts where we as humans really add value compared to the mechanical parts of an upgrade?

In part 2 of this series I’m going to explain how I’ve gone about automating the boring parts of the process of testing the latest .NET preview releases using GitHub Actions.
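One of the mechanical steps mentioned above is bumping the .NET SDK version that a repository pins in its `global.json` file. The sketch below shows that single step in isolation: it sets `sdk.version` while leaving any other settings (such as `rollForward`) untouched. The function name and the version strings are hypothetical placeholders, and this is not necessarily how the GitHub Actions workflows described in the post implement it.

```python
import json


def update_sdk_version(global_json: str, new_version: str) -> str:
    """Return global.json content with sdk.version set to new_version.

    Other properties (for example sdk.rollForward) are preserved as-is.
    """
    config = json.loads(global_json)
    # Create the "sdk" section if the file doesn't have one yet.
    config.setdefault("sdk", {})["version"] = new_version
    # Re-serialise with two-space indentation and a trailing newline.
    return json.dumps(config, indent=2) + "\n"


if __name__ == "__main__":
    # Placeholder version strings for illustration only.
    original = '{\n  "sdk": {\n    "version": "8.0.100-preview.5.1",\n    "rollForward": "latestMajor"\n  }\n}\n'
    print(update_sdk_version(original, "8.0.100-preview.6.1"), end="")
```

A workflow could run a step like this against each repository, then let the existing build and tests decide whether the new preview is safe to merge, leaving humans to focus on the failures.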